Active Learning in Machine Learning: Techniques for Efficiently Labeling Data

In this blog, you will discover the benefits of using active learning in your machine learning projects. Active learning is a powerful technique that allows a model to choose which examples it receives labels for, improving its efficiency and effectiveness. We will explore how active learning differs from traditional machine learning approaches, and provide examples of techniques you can use to efficiently label data in active learning. You will also learn about the benefits of using active learning and how to evaluate the effectiveness of different techniques.

Training a supervised machine learning model often requires a large amount of labeled data to achieve good results. However, it can be difficult for companies to provide data scientists with enough labeled data, which can be a major bottleneck in the data team’s work. When data scientists are given a large, unlabeled dataset, it can be challenging to train a high-performing model with it due to the time and resources required to manually label the data. In these cases, it may be necessary to find ways to efficiently label the data in order to train a good supervised model.

Active learning can help you label data efficiently when you have a large amount of data that needs to be labeled but don’t have the resources to label it all. This technique helps you prioritize which data to label first, making the labeling process more efficient and effective.

Table of Contents

This article will cover the following topics:

- Importance of Active Learning in Machine Learning

- What is Active Learning?

- Steps in Active Learning

- How does Active Learning differ from Traditional Machine Learning?

- Benefits of using Active Learning

- Techniques for Efficiently Labeling Data

- Choosing the Right Active Learning Technique

- How to evaluate the effectiveness of different active learning techniques?

- Conclusion


Importance of Active Learning in Machine Learning

There are several reasons why active learning is important in machine learning:

- Improved model performance: Active learning can lead to an improved model performance by allowing the model to focus on the dataset’s most informative and relevant examples. This can result in better generalization to new data and more accurate predictions.

- Reduced labeling costs: Active learning can significantly reduce the amount of labeled data required to train a model. The model can request labels for specific examples rather than being passively fed a fully labeled dataset. This can be particularly useful when labeling data is expensive or time-consuming.

- Increased efficiency: Active learning can make the model training process more efficient by allowing the model to learn more effectively with fewer labeled examples. This can save time and resources and make it easier to deploy machine learning models in real-world scenarios.

- Improved learning curve: Active learning can lead to a smoother learning curve. The model can focus on the most important and informative examples rather than being overwhelmed by many potentially irrelevant or redundant examples. This can make it easier to tune and optimize the model.

What is Active Learning?

Active learning is a machine learning approach in which a model can actively seek out and request labels for specific instances in a dataset rather than being passively fed a fully labeled dataset. This allows the model to focus on the most important and informative examples rather than being trained on many potentially irrelevant or redundant examples.

In simple terms, active learning is a way for a computer to learn about things by asking for help when it doesn’t know something. This can be more efficient and effective than just being given all the information upfront, as the computer can focus on the most important and relevant examples. Active learning is often used when labeling data is expensive or time-consuming, allowing the computer to make the most of the available labeled data.

Understand Active Learning with Example

Imagine that you are building a machine learning model to classify customer reviews as either positive or negative. You have a large dataset of customer reviews, but labeling the data is expensive and time-consuming, as it requires hiring human annotators to read and classify each review.

Using active learning, you could train a model to classify the reviews by starting with a small number of labeled examples and gradually adding more over successive rounds. In each round, the model requests labels for the specific examples it is most uncertain about, allowing it to learn more effectively and achieve better performance with fewer labeled examples.

In this scenario, active learning would allow you to train a high-quality model more efficiently, reducing the time and cost of labeling the data and making it easier to deploy the model in a real-world setting.

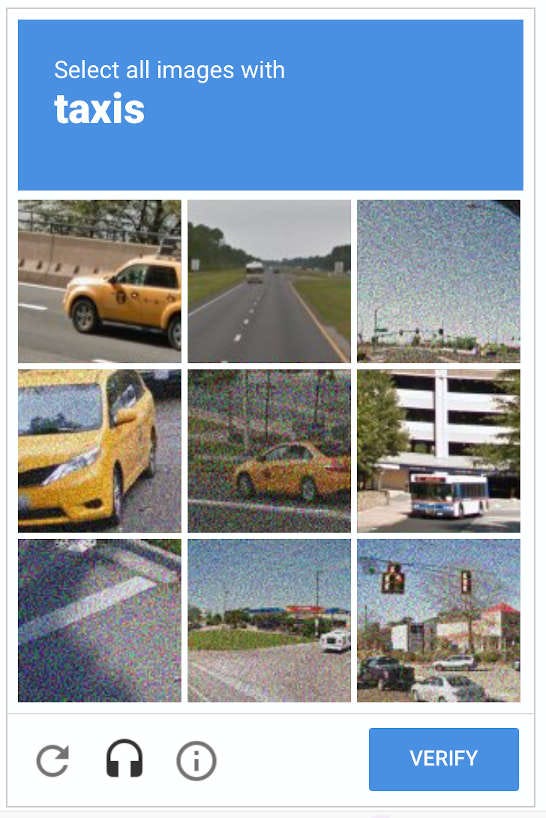

Do you remember verifying yourself as a human by selecting pictures?

Asking a user to select images of cars in a captcha for verification could be considered a form of active learning, as it involves the computer actively selecting examples for labeling. In this case, the computer is presenting the user with a series of images and asking the user to label the ones that contain cars. By actively selecting examples for the user to label, the computer is able to learn more effectively and improve its ability to distinguish between different types of images.

However, this type of captcha training is typically used for a different purpose than traditional active learning in machine learning. While traditional active learning is often used to improve the performance of a machine learning model, captcha training is typically used to improve the security of a system by identifying and blocking automated or malicious inputs. Nonetheless, the underlying principles of active learning are still at play in captcha training, as the computer is actively selecting examples for labeling in order to learn more effectively.

Steps in Active Learning

Here is an example of how to perform active learning step by step:

- Collect a dataset of unlabeled examples: The first step in active learning is to collect a dataset of unlabeled examples that the model will be able to request labels for. This dataset should represent the types of examples the model will encounter in the real world, and should be as large as possible to give the model a wide range of examples to choose from.

- Train an initial model: Next, you will need to train an initial model using a small number of labeled examples. This model will be used to make predictions on the unlabeled examples in the dataset and will serve as the starting point for the active learning process.

- Identify the most uncertain or informative examples: The model will then use its current knowledge to identify the examples in the dataset that it is most uncertain about or that are most informative. These examples will be the ones that the model requests labels for.

- Request labels for the identified examples: Once the model has identified the most uncertain or informative examples, it will request labels for these examples from a human annotator or another source.

- Update the model with the new labeled examples: Once the model has received labels for the identified examples, it will use these labeled examples to update its knowledge and improve its accuracy. This process can be repeated as many times as necessary, with the model requesting labels for additional examples in each round, until its performance is good enough or the labeling budget runs out.
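The five steps above can be sketched end to end on a deliberately tiny problem. In this minimal sketch, the "model" is a single decision threshold on one-dimensional data, the pool is synthetic, and the annotator is simulated by a hidden rule. The names `fit_threshold`, `active_learning_loop`, `oracle`, and `true_threshold` are all made up for illustration and do not come from any library.

```python
import random


def fit_threshold(labeled):
    """Place the decision boundary midway between the largest labeled
    negative example and the smallest labeled positive example."""
    negatives = [x for x, y in labeled if y == 0]
    positives = [x for x, y in labeled if y == 1]
    return (max(negatives) + min(positives)) / 2


def active_learning_loop(pool, oracle, seed_examples, n_queries):
    # Step 2: train an initial model on a small labeled seed set
    labeled = [(x, oracle(x)) for x in seed_examples]
    unlabeled = [x for x in pool if x not in seed_examples]
    boundary = fit_threshold(labeled)
    for _ in range(n_queries):
        if not unlabeled:
            break
        # Step 3: the most uncertain example is the one closest to the boundary
        query = min(unlabeled, key=lambda x: abs(x - boundary))
        # Step 4: request its label from the (simulated) annotator
        labeled.append((query, oracle(query)))
        unlabeled.remove(query)
        # Step 5: update the model with the new labeled example
        boundary = fit_threshold(labeled)
    return boundary, labeled


# Step 1: collect a pool of unlabeled examples (synthetic values in [0, 1])
rng = random.Random(0)
pool = sorted(rng.random() for _ in range(1000))
true_threshold = 0.37  # hidden rule, known only to the simulated annotator


def oracle(x):
    return int(x > true_threshold)


boundary, labeled = active_learning_loop(
    pool, oracle, seed_examples=[pool[0], pool[-1]], n_queries=15
)
print(f"labels used: {len(labeled)}, learned boundary: {boundary:.3f}")
```

Because each query lands near the current boundary, the region where the true threshold could lie shrinks quickly, so a handful of labels does the work that labeling all 1,000 points would do for a passive learner. Real problems are rarely this clean, but the loop structure is the same.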

Pseudocode for Implementing Active Learning

Here is pseudocode for implementing active learning on a CSV data file in Python:

```python
import pandas as pd


def active_learning(model, csv_file, label_function, threshold=0.5):
    # `model` is assumed to expose predict() and update(); these are
    # placeholders, not the API of a specific library.
    # threshold=0.5 is an arbitrary illustrative default.
    # Read the CSV file into a pandas DataFrame
    df = pd.read_csv(csv_file)
    # Initialize an empty list to store the labeled data
    labeled_data = []
    # Iterate over the rows of the DataFrame
    for index, row in df.iterrows():
        # Extract the example from the first column of the row
        example = row.iloc[0]
        # Make a prediction using the model
        prediction = model.predict(example)
        # Calculate the uncertainty of the prediction
        uncertainty = calculate_uncertainty(prediction)
        # If the uncertainty is above the threshold, request a label
        if uncertainty > threshold:
            label = label_function(example)
            labeled_data.append((example, label))
            # Update the model with the labeled example
            model.update(example, label)
    # Return the labeled data
    return labeled_data
```

- In the above pseudocode, the function called active_learning takes a model, a CSV file, and a label function as input

- Reads the CSV file into a pandas DataFrame

- Iterates over the rows of the DataFrame

- For each row:

  - Extracts the example and makes a prediction using the model

  - Calculates the uncertainty of the prediction

  - If the uncertainty is above the threshold, requests a label for the example using the label function and adds the labeled example to the labeled data list

  - Updates the model with the newly labeled example

- Returns the labeled data

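This is pseudocode for the calculate_uncertainty function used above:

```python
import numpy as np


def calculate_uncertainty(prediction):
    # Extract the probability of each class from the prediction
    probabilities = np.asarray(prediction["probabilities"], dtype=float)
    # Drop zero probabilities: they add nothing to the entropy and
    # would otherwise produce log2(0)
    probabilities = probabilities[probabilities > 0]
    # Calculate the entropy of the prediction (in bits)
    entropy = -np.sum(probabilities * np.log2(probabilities))
    # Return the entropy as the uncertainty
    return float(entropy)
```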

- calculate_uncertainty function takes a prediction as input

- Calculates uncertainty of prediction by extracting probabilities of each class and calculating entropy

- Entropy calculated as negative sum of each probability multiplied by its base-2 logarithm

- Higher entropy indicates more uncertain prediction

NOTE: Uncertainty could be calculated using other measures, such as one minus the maximum probability (a low maximum probability means the model is unsure) or the variance of the probabilities.
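To make the alternatives concrete, here is a small sketch comparing entropy with two other commonly used scores: least confidence (one minus the maximum probability) and margin (based on the gap between the two most likely classes). The function names and the two example distributions are illustrative assumptions, and each function takes a plain list of class probabilities rather than the prediction dictionary used above.

```python
import math


def entropy(probabilities):
    """Shannon entropy in bits; zero-probability classes contribute nothing."""
    return sum(-p * math.log2(p) for p in probabilities if p > 0)


def least_confidence(probabilities):
    """One minus the probability of the most likely class."""
    return 1.0 - max(probabilities)


def margin_uncertainty(probabilities):
    """One minus the gap between the two most likely classes."""
    top, second = sorted(probabilities, reverse=True)[:2]
    return 1.0 - (top - second)


confident = [0.9, 0.05, 0.05]  # model strongly prefers one class
unsure = [0.4, 0.35, 0.25]     # model is torn between classes

for measure in (entropy, least_confidence, margin_uncertainty):
    # Every measure should score the flatter distribution as more uncertain
    print(measure.__name__, measure(confident) < measure(unsure))
```

All three measures agree on this pair, but they can rank examples differently when there are many classes, so it is worth checking which one suits your task.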

Pseudocode for the label_function used previously:

```python
def label_function(example):
    # Display the example to the user
    print("Please label the following example:")
    print(example)
    # Ask the user to provide a label
    label = input("Enter the label: ")
    # Return the label
    return label
```

- In the above pseudo code, label_function takes an example as input

- Displays example to user

- Asks user to provide label for example

- Returns label

Note: This is just one way to implement a label function and different approaches may be used depending on the specific requirements of the task.

For example, the label function could be designed to present the example to a human annotator or to a crowd-sourcing platform, or it could be implemented to automatically label the example using another machine learning model.
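As a rough illustration of two such variations, the sketch below builds one label function that re-prompts a human until the answer is one of the allowed labels, and another that answers from a dictionary of already known labels, which is handy for simulating an annotator in experiments. The factory names `make_validated_label_function` and `make_lookup_label_function` and the `ask` parameter are hypothetical, introduced only for this example.

```python
def make_validated_label_function(allowed_labels, ask=input):
    """Build a label function that re-prompts until the answer is valid.

    `ask` defaults to the built-in input(); passing another callable makes
    it possible to plug in a different source of answers.
    """
    allowed = set(allowed_labels)

    def label_function(example):
        print("Please label the following example:")
        print(example)
        while True:
            label = ask(f"Enter the label {sorted(allowed)}: ").strip()
            if label in allowed:
                return label
            print(f"Unrecognized label: {label!r}")

    return label_function


def make_lookup_label_function(known_labels):
    """Build a label function that answers from a dict of known labels,
    simulating an annotator without any human in the loop."""

    def label_function(example):
        return known_labels[example]

    return label_function
```

Either returned function has the same one-argument shape as label_function above, so it can be passed to the active learning loop unchanged.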

How does Active Learning differ from Traditional Machine Learning?

| Aspect | Active Learning | Traditional Machine Learning |
|---|---|---|
| How examples are labeled | Model requests labels for specific examples | All examples are fully labeled upfront |
| Ability to choose which examples to receive labels for | Yes | No |
| Efficiency and effectiveness | Can be more efficient and effective, as model can focus on most important and informative examples | May be less efficient and effective, as model must learn from all examples regardless of relevance or importance |
| Cost-effectiveness | Can be more cost-effective, as it can reduce the amount of labeled data required | May be less cost-effective, as it requires a fully labeled dataset |

Overall, active learning allows a model to choose which examples it receives labels for, which can make it more efficient and effective, as well as more cost-effective in situations where labeling data is expensive or time-consuming. Traditional machine learning approaches, on the other hand, do not allow the model to choose which examples it receives labels for, and may be less efficient and effective, as well as less cost-effective.

Benefits of using Active Learning

There are several benefits of using active learning in machine learning:

- Improved model performance: Active learning can lead to improved model performance by allowing the model to focus on the most informative and relevant examples in the dataset. This can result in better generalization to new data and more accurate predictions.

- Reduced labeling costs: Active learning can significantly reduce the amount of labeled data required to train a model, as the model can request labels for specific examples rather than being passively fed a fully labeled dataset. This can be particularly useful when labeling data is expensive or time-consuming.

- Increased efficiency: Active learning can make the model training process more efficient by allowing the model to learn more effectively with fewer labeled examples. This can save time and resources and make it easier to deploy machine learning models in real-world scenarios.

- Improved learning curve: Active learning can lead to a smoother learning curve, as the model can focus on the most important and informative examples rather than being overwhelmed by many potentially irrelevant or redundant examples. This can make it easier to tune and optimize the model.

Techniques for Efficiently Labeling Data with Active Learning

- Pool-based sampling: The model is presented with a pool of unlabeled examples from which it can request labels. The model can choose which examples to request labels for based on its current level of uncertainty or the informativeness of the examples.

For example,

In the customer review example discussed earlier, labeling a large dataset of reviews as either positive or negative is expensive and time-consuming. To improve the efficiency and effectiveness of the model training process, you decide to use active learning. Specifically, you use pool-based sampling, which allows the model to request labels for the most uncertain examples in the dataset. This might include reviews that contain ambiguous language or are written in a language the model is not familiar with. By focusing on the most informative and relevant examples, the model is able to learn more effectively and achieve better performance with fewer labeled examples. Using this approach allows you to train a high-quality model more efficiently. It reduces the time and cost of labeling the data, making it easier to deploy the model in a real-world setting.
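The selection step of pool-based sampling can be sketched as ranking the whole pool by uncertainty and taking the top few. In this minimal sketch the predicted probabilities are hypothetical numbers written by hand, not the output of a real model, and `select_most_uncertain` is a name chosen for this example.

```python
import math


def select_most_uncertain(pool_probabilities, batch_size):
    """Return the indices of the `batch_size` pool examples whose predicted
    class distributions have the highest entropy."""

    def entropy(probabilities):
        return sum(-p * math.log2(p) for p in probabilities if p > 0)

    ranked = sorted(
        range(len(pool_probabilities)),
        key=lambda i: entropy(pool_probabilities[i]),
        reverse=True,
    )
    return ranked[:batch_size]


# Hypothetical predicted probabilities (positive, negative) for four reviews
pool_probabilities = [
    [0.98, 0.02],  # clearly positive
    [0.55, 0.45],  # ambiguous
    [0.10, 0.90],  # clearly negative
    [0.48, 0.52],  # ambiguous
]
print(select_most_uncertain(pool_probabilities, batch_size=2))  # [3, 1]
```

The two ambiguous reviews are sent to the annotators first, while the clear-cut ones are left unlabeled for now.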

- Committee-based approaches: In committee-based approaches, a committee of models is used to label examples. The committee members can either label the examples independently, with the labels combined using a voting or averaging scheme, or label the examples collaboratively, with each model providing feedback to the others.

For example,

Imagine you are building a machine learning model to classify images of animals. You have a large dataset of images, but labeling the data is expensive and time-consuming. Using a committee-based approach, you could train a group of models to label the images independently and then combine the labels using a voting or averaging scheme. Each final label then reflects the judgment of several models rather than just one, which can improve the overall accuracy of the labeling process.
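A voting scheme of this kind can be sketched in a few lines. The example below assumes each committee member has already produced one label per image; the votes are hypothetical, and `majority_vote` is a name chosen for illustration.

```python
from collections import Counter


def majority_vote(committee_labels):
    """Combine one label per committee member into a single label and
    report the share of members that voted for it.

    On a tie, the label that appears first in the list wins.
    """
    counts = Counter(committee_labels)
    label, votes = counts.most_common(1)[0]
    return label, votes / len(committee_labels)


# Hypothetical votes from three models on two animal images
print(majority_vote(["cat", "cat", "dog"]))  # label 'cat', two of three agree
print(majority_vote(["dog", "dog", "dog"]))  # label 'dog', unanimous
```

The agreement share is a useful by-product: images with low agreement are natural candidates for review by a human annotator.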

- Query-by-committee: Query-by-committee is a type of committee-based approach in which a committee of models is used to identify the examples on which its members disagree most, as these are the examples most likely to be misclassified by the committee as a whole. These examples are then labeled and used to update the models in the committee.

For example,

Imagine you are building a machine learning model to classify customer complaints as either urgent or non-urgent. You have a large complaints dataset, but labeling the data is expensive and time-consuming. Using query-by-committee, you could train a committee of models to predict a label for each complaint and identify the complaints on which the models disagree most, since those are the ones most likely to be misclassified by the committee. These examples would then be labeled by a human annotator and used to update the committee's models, allowing the committee to learn more effectively and improve its overall accuracy.
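One common way to find such examples is to measure how much the committee members disagree, for instance with the entropy of their votes. The sketch below assumes a committee of four models whose votes on four complaints are given; the votes and the function names are made up for illustration.

```python
import math
from collections import Counter


def vote_entropy(committee_labels):
    """Disagreement among committee members, measured as the entropy
    (in bits) of the distribution of their votes."""
    total = len(committee_labels)
    counts = Counter(committee_labels)
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())


def query_by_committee(votes_per_example, n_queries):
    """Return the indices of the examples the committee disagrees on most."""
    ranked = sorted(
        range(len(votes_per_example)),
        key=lambda i: vote_entropy(votes_per_example[i]),
        reverse=True,
    )
    return ranked[:n_queries]


# Hypothetical votes from a committee of four models on four complaints
votes_per_example = [
    ["urgent", "urgent", "urgent", "urgent"],              # unanimous
    ["urgent", "urgent", "urgent", "non-urgent"],          # 3-1 split
    ["urgent", "urgent", "non-urgent", "non-urgent"],      # 2-2 split
    ["non-urgent", "non-urgent", "non-urgent", "non-urgent"],
]
print(query_by_committee(votes_per_example, n_queries=2))  # [2, 1]
```

The evenly split complaint is queried first, followed by the 3-1 split; the unanimous ones are not sent for labeling.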

These techniques can label data efficiently in active learning, allowing the model to focus on the most important and informative examples and improve its performance.

Choosing the Right Active Learning Technique

- Type of data: Different active learning techniques may be better suited to different data types, such as text, images, or audio. It is important to consider the characteristics of your data when selecting an active learning technique.

- Labeling cost: The cost of labeling data can be a significant factor in the active learning process. Techniques that require fewer labeled examples may be more suitable in scenarios where labeling data is expensive or time-consuming.

- Model performance: Different active learning techniques may lead to different levels of model performance. It is important to consider the desired level of accuracy and how much time and resources you are willing to invest in the active learning process when selecting a technique.

- Availability of annotators: Some active learning techniques may require the use of human annotators to label the data, while others may be able to use automated or semi-automated labeling approaches. It is important to consider the availability of annotators when selecting a technique.

- Scalability: Active learning techniques may differ in their scalability, or ability to handle large datasets. It is important to consider the size of your dataset and the resources available when selecting a technique.

How to Evaluate the Effectiveness of Different Active Learning Techniques?

Here are some key points to consider when evaluating the effectiveness of different active learning techniques:

- Model performance: Measure the accuracy, precision, and recall of the model trained using each technique.

- Labeling cost: Compare the number of labeled examples required by each technique and calculate the cost of labeling them.

- Time required to train the model: Consider the time required to train the model using each technique.

- Human annotator agreement: If human annotators are used, measure the level of agreement among the annotators.
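Two of these checks are easy to compute by hand. The sketch below shows accuracy, precision, and recall for a binary task, plus Cohen's kappa, which is one standard way to measure agreement between two annotators while correcting for chance. The function names and the example labels are illustrative assumptions; in practice a library such as scikit-learn provides equivalent metrics.

```python
from collections import Counter


def classification_metrics(y_true, y_pred, positive_label):
    """Accuracy, precision, and recall for one class treated as positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive_label and p == positive_label for t, p in pairs)
    fp = sum(t != positive_label and p == positive_label for t, p in pairs)
    fn = sum(t == positive_label and p != positive_label for t, p in pairs)
    correct = sum(t == p for t, p in pairs)
    return {
        "accuracy": correct / len(pairs),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


def cohen_kappa(labels_a, labels_b):
    """Agreement between two annotators, corrected for chance agreement."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used the same single label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical labels for four reviews
y_true = ["pos", "pos", "neg", "neg"]
y_pred = ["pos", "neg", "neg", "pos"]
print(classification_metrics(y_true, y_pred, positive_label="pos"))

annotator_a = ["pos", "pos", "neg", "neg"]
annotator_b = ["pos", "pos", "neg", "pos"]
print(cohen_kappa(annotator_a, annotator_b))  # 0.5
```

To compare techniques fairly, compute the model metrics on the same held-out test set after each technique has used the same number of labels.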

Conclusion

In conclusion, active learning is a valuable technique in machine learning that allows a model to choose which examples it receives labels for, improving its efficiency and effectiveness. Several techniques can be used to label data efficiently in active learning, including pool-based sampling, committee-based approaches, and query-by-committee. These techniques can significantly reduce the amount of labeled data required and make the model training process more efficient, resulting in improved model performance and reduced labeling costs.

When selecting an active learning technique, it is important to consider the type of data, the labeling cost, the desired level of model performance, the availability of annotators, and the scalability of the technique. To evaluate the effectiveness of different active learning techniques, consider the model performance, the labeling cost, the time required to train the model, and human annotator agreement.

Overall, active learning is a powerful tool that can greatly improve the efficiency and effectiveness of machine learning projects. We encourage readers to consider using active learning in their own machine-learning projects to improve the performance of their models and reduce the cost and time required to label data.

FAQs

What is Active Learning?

Active learning is a machine learning technique that allows a model to choose which examples it receives labels for, improving its efficiency and effectiveness. By requesting labels for the most uncertain or informative examples, the model can learn more effectively and achieve better performance with fewer labeled examples.

How is Active Learning different from traditional machine learning approaches?

In traditional machine learning approaches, the model is given a fixed dataset with pre-labeled examples, and it uses this dataset to learn about the problem. In contrast, active learning allows the model to request labels for specific examples, allowing it to focus on the most uncertain or informative examples and learn more effectively with fewer labeled examples.

What are some techniques for efficiently labeling data in Active Learning?

There are several techniques that can be used to efficiently label data in active learning, including pool-based sampling, committee-based approaches, and query-by-committee. These techniques can significantly reduce the amount of labeled data required and make the model training process more efficient.

What are the benefits of using Active Learning?

Some benefits of using active learning include reduced labeling costs, improved model performance, and more efficient use of resources. By allowing the model to request labels for the most uncertain or informative examples, active learning can significantly reduce the amount of labeled data required and make the model training process more efficient.

How can I evaluate the effectiveness of different Active Learning techniques?

To evaluate the effectiveness of different active learning techniques, consider the model performance, labeling cost, time required to train the model, and human annotator agreement. It is important to consider a combination of these factors when evaluating the effectiveness of different active learning techniques, as each technique may have different trade-offs in terms of these factors.