Its All About Dynamic Programming

Dynamic programming is a computer programming technique whose goal is to solve problems efficiently.So this article will teach you about elements,applications,approaches of dynamic programming.

The goal of data structure and algorithm is to organize the data in an optimized and efficient manner so that it can be retrieved easily. Data structure mainly uses three approaches to solve any problem or to find a quick solution to any problem. Those three approaches are: Divide & Conquer, Greedy Algorithm and Dynamic Programming

In Divide and Conquer methodology, the main problem is divided into sub-problems, and those sub-problems are further divided until we get a unitary subproblem that can be solved. After finding the solution to the unitary sub-problems and combining these solutions, the main problem can be solved.The greedy algorithm approach works the same as its name suggests. The algorithm always selects the solution with the least cost in every iteration. Dynamic programming is the methodology in which each problem is divided into sub-problem and solved; when the problem cannot be subdivided further, it is solved, and the solution is stored. The idea behind storing the solution is to reuse it, whenever the same problem function is encountered, instead of solving it again.

In this article, we will discuss dynamic programming, examples of dynamic programming, working of dynamic programming, approaches to solving a problem using dynamic programming, and applications of dynamic programming.

Table of contents

Best-suited IT & Software courses for you

Learn IT & Software with these high-rated online courses

Dynamic Programming

Dynamic programming(DP) is a computer programming technique whose goal is to solve problems efficiently. It solves a class of problems using overlapping subproblems, memoization, and optimal substructure property.

If any problem can be divided into subproblems and then further into smaller problems, it is the tenancy of dynamic programming to divide a large problem into the unit smaller problem and solve it. If the problems overlap [same problems occur multiple times], then the solution can be saved for future reference. Reusing solutions can enhance the CPU’s performance and takes less time to solve the problems, resulting in the most optimized and efficient solution.

DP is used to solve optimization problems. It guarantees to provide the optimal solution to the problem if the solution of the problem exists.

Example:

Let’s consider the Fibonacci Series up from 0 to 8. Fibonacci series is such a series in which the resulting number is the sum of the previous two numbers.

So, the series up to eight would be 0, 1, 1, 2, 3, 5, 8

In this, each number is the sum of two preceding numbers. This can be defined in a function as

F(n) = F(n-1) + F(n-2), where F(0) = 0 and F(1) = 1

Algorithm:

static int count =0

int fib (int n)

{

if memo[n]!= NULL

return memo (n)

count++;

if (n<0)

Error;

if (n==0)

return 0;

if (n==1)

return 1;

sum = fib (n-1) + fib (n-2)

Memo [n] = sum;

return sum;

}

Explanation:

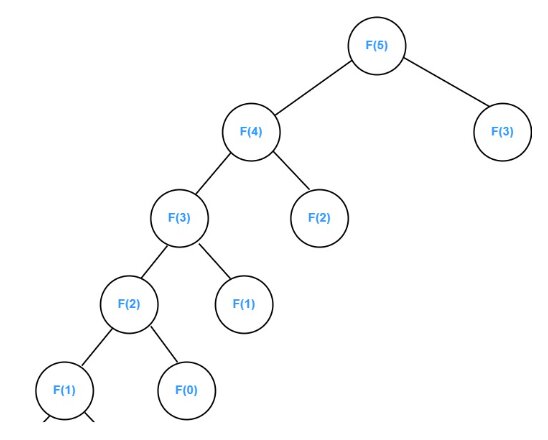

F(5) = F(4) + F(3) ……. Eq1

We have to find F(4) and F(3) using the above recursive function.

F(4) = F(3) + F(2) ………Eq 2

We have to find F(3) and F(2) using the above recursive function.

F(3) = F(2) + F(1) …….. Eq 3

We have to find F(2) and F(1) using the above recursive function.

F(2) = F(1) + F(0)………. Eq 4

Now, we have the value of F(1) and F( 0), which are 1 and 0, respectively.

Putting these values in Eq 4. We get,

F(2) = 0+1 = 1

Putting values of F(2) and F(1) in Eq 3

F(3) = 1 + 1 = 2

Putting values of F(3) and F(2) in Eq 2

F(4) = 2 + 1 = 3

Putting values of F(4) and F(3) in Eq 1

F(5) = 3 + 2 = 5

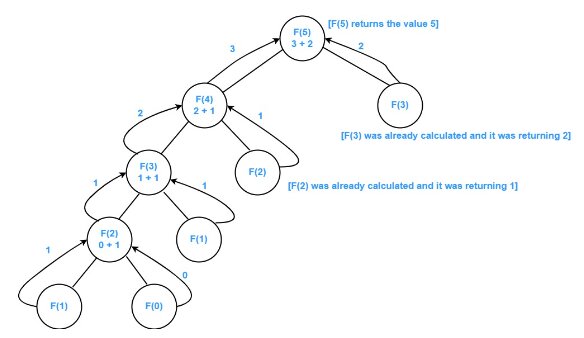

In the above image, we can see how

The Fibonacci function up to five is solved, and we have encountered a few functions repeatedly, like f(3) and f(2), so instead of calculating them again and again, we have saved the solution for the future and used it whenever required.

Working of Dynamic programming

The following steps are followed using DP to solve any problem:

- First of all, it breaks down one complex problem into similar subproblems.

- Then it finds the optimal solution to these subproblems.

- It stores the results of the subproblems. The process of storing the results is known as memoization.

- Memoization is used to reuse the solution of the subproblem so that the same problem does not need to be calculated repeatedly. This will optimize the system and make it efficient as well as fast.

- Finally, after calculating the solution to subproblems, we are able to solve the larger complex problem.

Elements of DP

Dynamic programming applies to those problems which have the following properties:

- Overlapping Sub-problems: The same problems repeatedly occur while solving complex problems.

- Optimal Substructure: The solution of a large or complex optimization problem can be obtained by combining the optimal solution of its sub-problems.

- Memoization: Memoization is the process of storing intermediate results so that these results can be used in the future.

Approaches in Dynamic Programming

There are two techniques to solve dynamic problems.

- Top-down approach: It makes use of the memoization technique. A problem is broken into subproblems in a top-down approach, and the solution to sub-problems is stored to solve the main problem.

- Bottom-up approach: It follows the tabulation method. Bottom-up approach work from subproblem to way up. This technique ensures that each subproblem is solved before the main problem.

Complexity

Space complexity may increase because we are storing intermediate results.

But, eventually, time complexity decreases as the problem solving becomes faster because of not solving similar problems repeatedly.

Applications of Dynamic Programming

- Dynamic programming is used to find the optimal solution to the problem.

- Longest Common Subsequence

- Matrix Chain Multiplication

- Job Scheduling

- Optimal Search solutions

Contributed by-Kanika Joshi